High-Speed Interface Data Rates Have Multiplied by Twenty, But the Difficulty of SI/PI Simulation Has Multiplied by Much More


2026.04.14

In 2003, the data rate for DDR1 was 400 Mbps.

By around 2025, the latest DDR5 specifications had surpassed 8000 Mbps, HBM3E (9.6 Gbps/pin) had reached volume production, and PCIe Gen6 (64 GT/s) was entering commercial deployment.

Over two decades, data rates have increased by a factor of twenty.
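To put the factor of twenty in timing terms, the short sketch below converts the two per-pin data rates quoted above into unit intervals (the time available for one bit). The function name is illustrative only.

```python
def unit_interval_ps(rate_mbps: float) -> float:
    """Unit interval (one bit time) in picoseconds for a per-pin rate in Mbps."""
    # 1 Mbps = 1e6 bit/s, so UI = 1 / (rate * 1e6) s = 1e6 / rate ps.
    return 1e6 / rate_mbps


ddr1_ui = unit_interval_ps(400)    # DDR1 rate quoted in the text
ddr5_ui = unit_interval_ps(8000)   # DDR5 rate quoted in the text

print(f"DDR1 UI: {ddr1_ui:.0f} ps")   # 2500 ps
print(f"DDR5 UI: {ddr5_ui:.0f} ps")   # 125 ps
print(f"Rate ratio: {8000 / 400:.0f}x")
```

The bit time shrinks from 2.5 ns to 125 ps, so jitter, reflections, and modeling errors that were once a small fraction of the unit interval now consume a much larger share of it.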

Engineers working in Signal Integrity (SI) know exactly what this means: it is not just that the signals are running faster, it is that the legacy simulation methodologies are failing, one by one.

I. The First Failure: Macromodels Can No Longer Keep Up

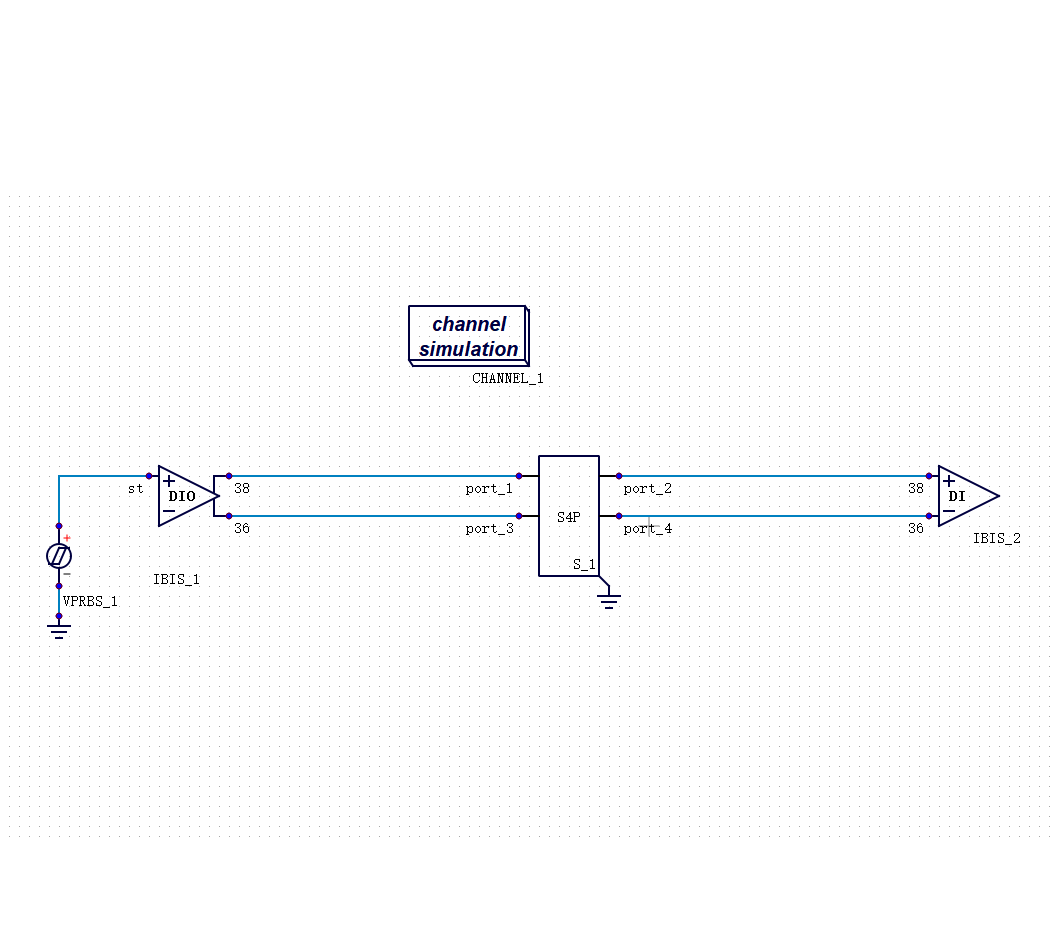

When performing high-speed interface simulation, the IBIS model is an unavoidable tool.

Its logic is straightforward: use a behavioral-level macromodel to describe the I/O characteristics of a chip, enabling channel simulation without needing to know the chip's internal circuit details. This method was perfectly sufficient during the DDR3 and DDR4 eras.

However, entering the data rate regimes of DDR5, HBM3, and PCIe Gen5, problems arise.

A macromodel is an approximate description of chip behavior. The higher the data rate, the more complex the non-linear characteristics of the chip I/O become, and the larger the approximation error grows. Under extreme operating conditions (high temperature, low voltage, and worst-case PVT corners), this error may already exceed the design margin itself.

DDR5 introduces a more fundamental issue: equalization is now a mandatory part of the interface. Traditional IBIS macromodels cannot predict the post-equalization eye diagram, because they describe the static drive behavior of the chip I/O, whereas equalization is a dynamic, pattern-dependent algorithmic process. To address this, the industry introduced the IBIS-AMI extension to handle equalization modeling, but AMI remains fundamentally a behavioral approximation and still hits an accuracy ceiling in complex scenarios. This is not to say AMI has no value: in hybrid solutions combined with time-domain simulation, it provides functional support for equalization modeling. Its limitation is that AMI alone cannot serve as the accuracy baseline for high-speed interface Signoff.

Engineers run simulations that show sufficient margin, but post-silicon testing reveals the margin is inadequate. The root cause often lies right here.

II. The Second Failure: The Accumulation of Errors in Segmented Simulation

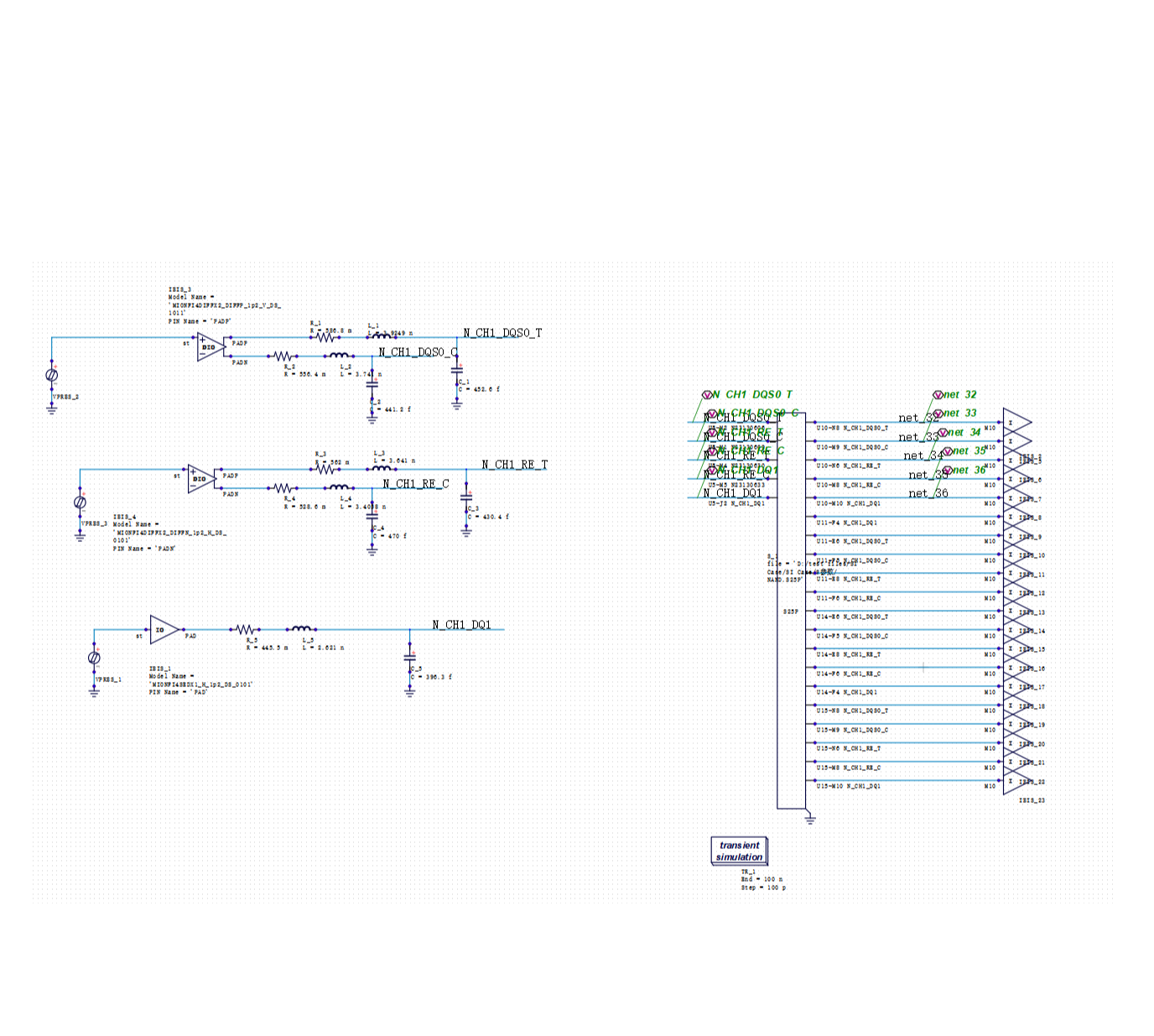

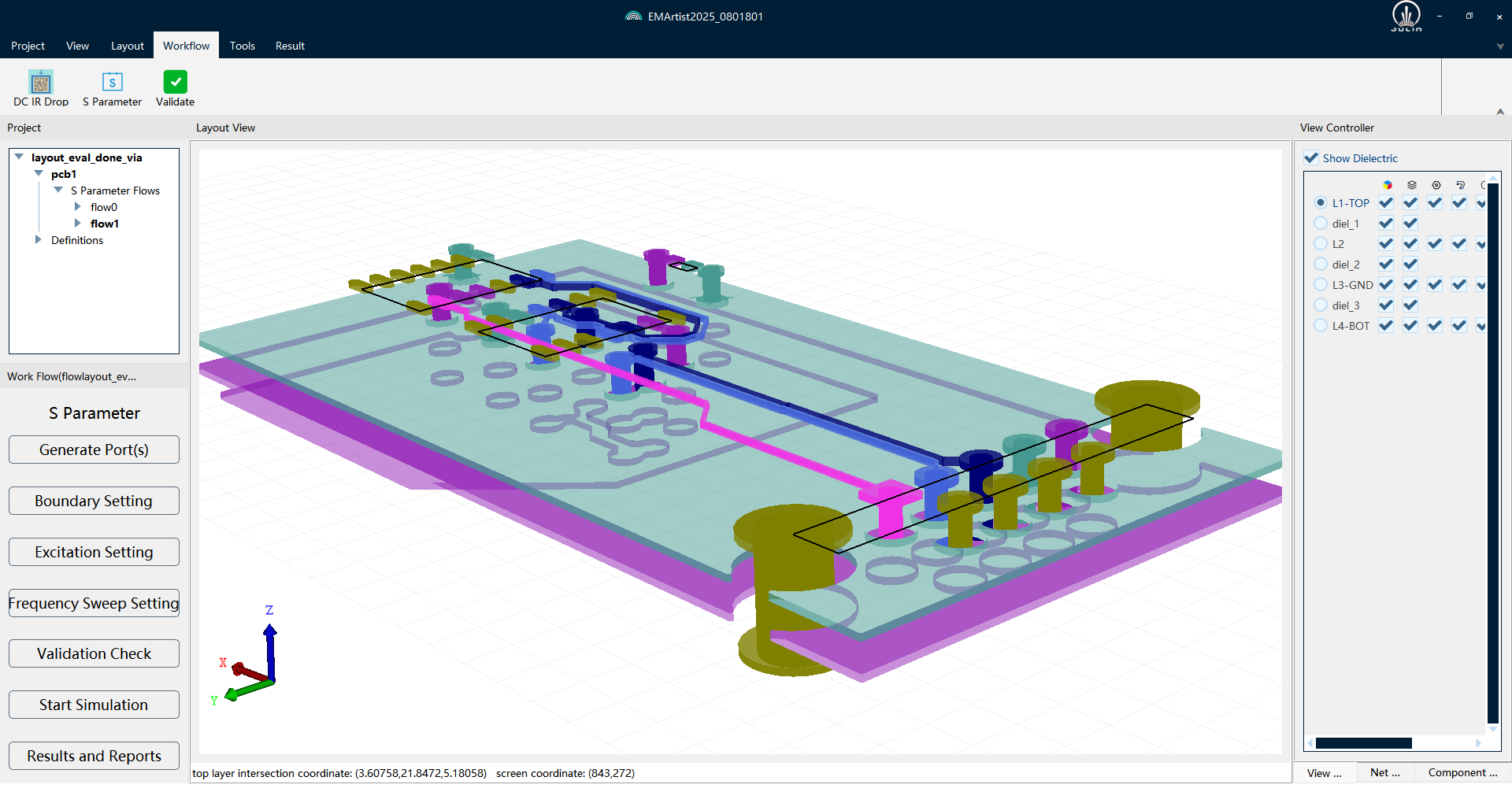

The traditional SI/PI simulation workflow treats the chip, package, and PCB in separate layers: first extract the chip's I/O model, then build a separate parasitic parameter model for the package, then perform board-level transmission line simulation, and finally superimpose the results.

This method worked fine in the low-speed era because the interaction between the layers was minimal; calculating them separately and then combining them yielded an acceptable error.

In high-speed scenarios, this assumption begins to collapse.

The parasitic inductance of the package interacts with the drive capability of the chip I/O; impedance discontinuities on the PCB cause reflections at the package pins, which in turn affect the waveform at the chip end. These are system-level coupling effects, and segmented modeling inherently discards this information.
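The effect can be illustrated with a toy two-segment channel. The sketch below is not a real package or board model: the element values (1 nH, 0.5 pF, a 40-ohm lossless trace with 200 ps delay) and the 50-ohm reference are all assumptions chosen for illustration. It compares a "segmented" estimate, where each segment is characterized in isolation against ideal terminations and the results are multiplied, with the transmission of the full cascade, which keeps the reflections between segments.

```python
import math

Z0 = 50.0  # reference impedance in ohms (assumed)


def matmul(m, n):
    """Product of two 2x2 ABCD matrices stored as nested tuples."""
    (a, b), (c, d) = m
    (e, f), (g, h) = n
    return ((a * e + b * g, a * f + b * h), (c * e + d * g, c * f + d * h))


def series_l(l_h, w):
    return ((1, 1j * w * l_h), (0, 1))


def shunt_c(c_f, w):
    return ((1, 0), (1j * w * c_f, 1))


def tline(zc, delay_s, w):
    """Lossless transmission line with characteristic impedance zc."""
    th = w * delay_s
    return ((math.cos(th), 1j * zc * math.sin(th)),
            (1j * math.sin(th) / zc, math.cos(th)))


def s21(m):
    """Transmission coefficient of an ABCD matrix between Z0 terminations."""
    (a, b), (c, d) = m
    return 2 / (a + b / Z0 + c * Z0 + d)


def compare(f_hz):
    """Return (segmented, coupled) |S21| at one frequency."""
    w = 2 * math.pi * f_hz
    package = matmul(series_l(1e-9, w), shunt_c(0.5e-12, w))  # hypothetical values
    board = tline(40.0, 200e-12, w)                           # hypothetical values
    segmented = abs(s21(package)) * abs(s21(board))  # each part solved alone
    coupled = abs(s21(matmul(package, board)))       # full cascade, reflections kept
    return segmented, coupled


seg, cpl = compare(3.75e9)
print(f"segmented |S21| = {seg:.4f}, coupled |S21| = {cpl:.4f}")
```

Even in this small lossless example the two numbers disagree, because the board no longer sees an ideal 50-ohm source once the package is attached. In a real chip, package, and PCB stack the interactions are richer, but the mechanism is the same.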

Each layer "passes" simulation individually, but when combined, they fail to meet timing requirements—this situation has become increasingly common in DDR5 and HBM projects.

III. The Third Failure: Divergence in Simulation Methodologies

Sometimes, the dilemma engineers face isn't that "the tools aren't good enough," but rather "not knowing which methodology to trust."

Take BER (Bit Error Rate) Signoff as an example. There are currently two main routes in the industry:

Statistical Analysis (Channel Simulation): Originally designed for SerDes interfaces and later extended to DDR interfaces. It is fast but relies on convolution/accumulation algorithms, which suffer from decreased accuracy in highly non-linear scenarios.

Transient Simulation: Accuracy is closer to actual physical behavior, but the computational load is massive, and running a complete scenario takes an excruciatingly long time.

Engineers are unsure which to trust, or they try to run both but lack sufficient time.
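As a rough sketch of what the statistical route does internally, the code below builds the probability distribution of ISI voltage at the sampling point by convolving one two-point distribution per interfering bit. The pulse-response values (a 300 mV cursor and three ISI taps) are hypothetical, and the approach assumes the channel is linear so that contributions from individual bits simply add. That assumption is exactly what makes the method fast.

```python
from collections import defaultdict


def isi_pdf_mv(isi_taps_mv):
    """Distribution of total ISI (in mV) for random NRZ data.

    Each interfering bit contributes +tap or -tap with probability 1/2;
    the total is accumulated tap by tap, which is a discrete convolution.
    """
    pdf = {0: 1.0}
    for tap in isi_taps_mv:
        nxt = defaultdict(float)
        for v, p in pdf.items():
            nxt[v + tap] += 0.5 * p   # interfering bit = +1
            nxt[v - tap] += 0.5 * p   # interfering bit = -1
        pdf = dict(nxt)
    return pdf


def worst_case_eye_mv(cursor_mv, isi_taps_mv):
    """Worst-case inner eye half-height: cursor minus the sum of |ISI|."""
    return cursor_mv - sum(abs(t) for t in isi_taps_mv)


taps = [80, -30, 20]          # hypothetical ISI samples, in mV
pdf = isi_pdf_mv(taps)
for v in sorted(pdf):
    print(f"ISI {v:+5d} mV  p = {pdf[v]:.3f}")
print("worst-case half eye:", worst_case_eye_mv(300, taps), "mV")
```

The cost grows with the number of taps rather than the number of simulated bits, which is why very low BER levels can be estimated quickly. The price is the linear-superposition assumption built into every step.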

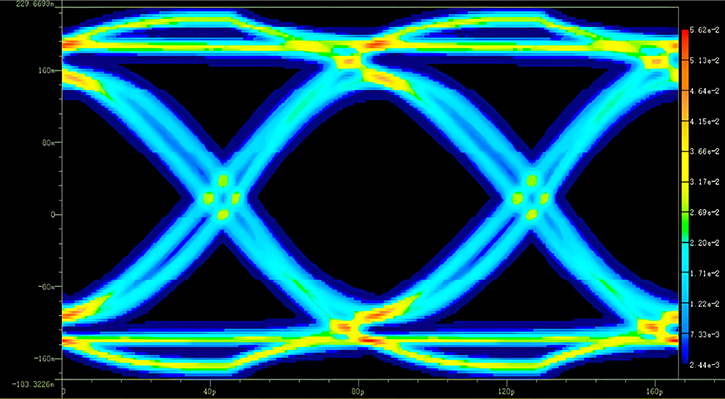

Both methods involve trade-offs, but DDR5 makes this contradiction much sharper—due to the introduction of DFE (Decision Feedback Equalization).

DFE is one of the most critical architectural differences between DDR5 and DDR4. It works by cancelling Inter-Symbol Interference (ISI) in real time, based on the decisions already made for previous symbols. This "history-dependent" feedback mechanism breaks a core assumption of statistical analysis: that the signal is stationary and ergodic. Statistical methods use convolution/accumulation to estimate the eye diagram, and they inherently lose accuracy when handling non-linear equalization such as DFE. In contrast, the transient method simulates bit by bit and faithfully reproduces how the DFE actually operates, but at a very high computational cost.
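The history dependence is easy to see in a minimal bit-by-bit loop. The sketch below is a generic textbook-style DFE, not the DDR5-specified receiver. The pulse response, the single feedback tap, and the bit pattern are all hypothetical. The key point is that the feedback term is computed from the receiver's own past decisions, so each sample must be resolved before the next one can be evaluated.

```python
def simulate_dfe(bits, pulse, dfe_taps):
    """Bit-by-bit receive loop.

    pulse[0] is the cursor, pulse[1:] are post-cursor ISI samples.
    dfe_taps[k] is the weight applied to the decision made k+1 bits ago.
    """
    symbols = [1 if b else -1 for b in bits]
    decisions = []
    for n in range(len(symbols)):
        # Linear channel: superpose contributions of current and past symbols.
        y = sum(pulse[k] * symbols[n - k]
                for k in range(len(pulse)) if n - k >= 0)
        # Non-linear feedback: subtract ISI estimated from *decided* symbols.
        fb = sum(dfe_taps[k] * decisions[n - 1 - k]
                 for k in range(len(dfe_taps)) if n - 1 - k >= 0)
        decisions.append(1 if y - fb >= 0 else -1)
    return [d > 0 for d in decisions]


def count_errors(sent, received):
    return sum(a != b for a, b in zip(sent, received))


bits = [True, False, True, True, False, False, True, False] * 4
pulse = [0.30, 0.35, 0.05]   # first post-cursor exceeds the cursor: closed eye

print("errors without DFE:", count_errors(bits, simulate_dfe(bits, pulse, [])))
print("errors with 1-tap DFE:", count_errors(bits, simulate_dfe(bits, pulse, [0.35])))
```

With this channel the unequalized receiver makes errors on transitions, while the one-tap DFE recovers the pattern. Because the feedback uses decisions rather than the transmitted bits, a wrong decision would also corrupt the next sample, which is the kind of behavior a pure superposition-based estimate does not capture directly.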

The conclusions drawn by these two methods can differ significantly in DDR5 scenarios.

This is not the fault of the engineers; it is an industry-wide problem where a consensus on Signoff methodologies for interfaces at DDR5 and above has not yet been reached.

IV. The Fourth Failure: Tool Fragmentation Slows Down the Entire Workflow

To complete a full SI/PI analysis, an engineer often needs to open multiple tools simultaneously:

Tool A runs the channel simulation, Tool B handles transient analysis, and Tool C is used to view waveforms and measure eye diagram parameters. Each tool does its specific part well, but data formats, simulation settings, and waveform displays are siloed.

This creates two practical problems:

Efficiency Loss: Repeatedly importing and exporting data between tools, and switching between multiple interfaces while troubleshooting. An analysis that should take a few hours can end up taking a whole day.

Introduction of Errors: Passing data between different tools with inconsistent format conversions and parameter settings naturally introduces additional deviations. Sometimes, engineers think there is a design issue, when it's actually an issue with tool integration.

V. The Cost is Quantifiable

All the failures mentioned above ultimately show up in the yield rate.

Data shows that in high-speed interface design, designs that skip simulation-driven system-level optimization (DOE/RSM) can have nearly double the defect rate of designs that undergo comprehensive simulation optimization.

And this doesn't even account for the costs of re-spins caused by unreliable simulation conclusions, or the price of delayed project cycles.

VI. An Expanding Gap

DDR5, HBM3, UCIe, PCIe Gen5—this generation of high-speed interface standards has pushed SI/PI simulation to the boundaries of existing methodologies.

Data rates are still climbing. Chiplet packaging has elevated the complexity of inter-chip interconnects to yet another level. Engineers are facing four concurrent dilemmas: insufficient model accuracy, accumulation of errors in segmented simulation, a lack of methodological consensus, and fragmented tool workflows.

This is not a single isolated problem, but rather the systemic pressure facing the entire simulation infrastructure in the high-speed era.
