The Dilemma of SPICE Simulation in Complex Chip Design: The Trade-off Between Accuracy and Efficiency


2026.03.13

Key Points at a Glance:

The High-Speed Interface Dilemma: Design teams are caught in a trade-off between simulation accuracy and simulation efficiency.

The Accuracy-Speed Gap: Traditional True-SPICE provides high fidelity but can take weeks; FastSPICE offers speed but sacrifices precision.

The Fundamental Solution: Full transistor-level physical modeling is the only viable technical path. Reliable system-level verification can only be built upon a foundation of hyper-accurate device models.

I. Core Challenges of SPICE Simulation

In modern integrated circuit (IC) design, SPICE (Simulation Program with Integrated Circuit Emphasis) remains the gold standard for verifying circuit functionality and performance. However, as chip design complexity grows exponentially, traditional SPICE simulation is hitting a wall.

High-speed interface standards such as DDR, PCIe, and UCIe keep pushing transmission rates higher. As a result, Signal Integrity (SI) analysis must now handle device counts ranging from millions to tens of millions. At the same time, accuracy requirements have become extreme: certain application scenarios now demand a Bit Error Rate (BER) at the 1e-50 level, far beyond the traditional 1e-12 standard.

This has created an industry-wide dilemma:

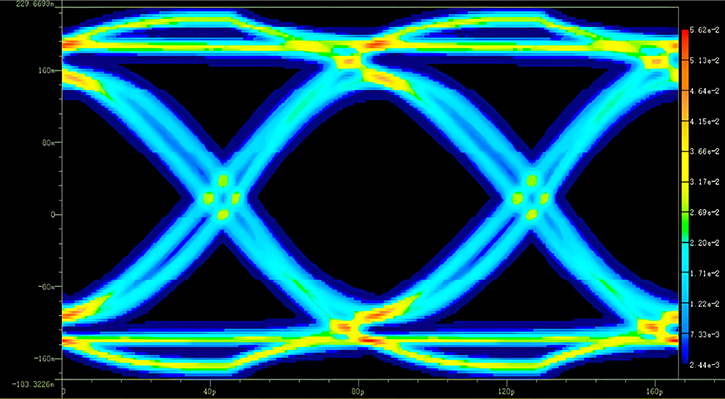

The True-SPICE Bottleneck: True-SPICE delivers golden-reference precision, but a complete eye diagram analysis often takes days or even weeks. For a typical high-speed SerDes link, the compute time needed for millions of Monte-Carlo statistical runs can easily exceed the project's entire schedule.

The FastSPICE Risk: FastSPICE-class acceleration tools can speed up simulation by more than 10X. However, the resulting loss of precision often causes prediction errors in corner cases, and after silicon these errors can turn into fatal design flaws.

The Core Contradiction: The trade-off between precision and efficiency has become the primary bottleneck limiting the productivity of modern chip design.

II. Why is SPICE Simulation Becoming Increasingly Difficult?

2.1 Exponential Growth of Circuit Scale

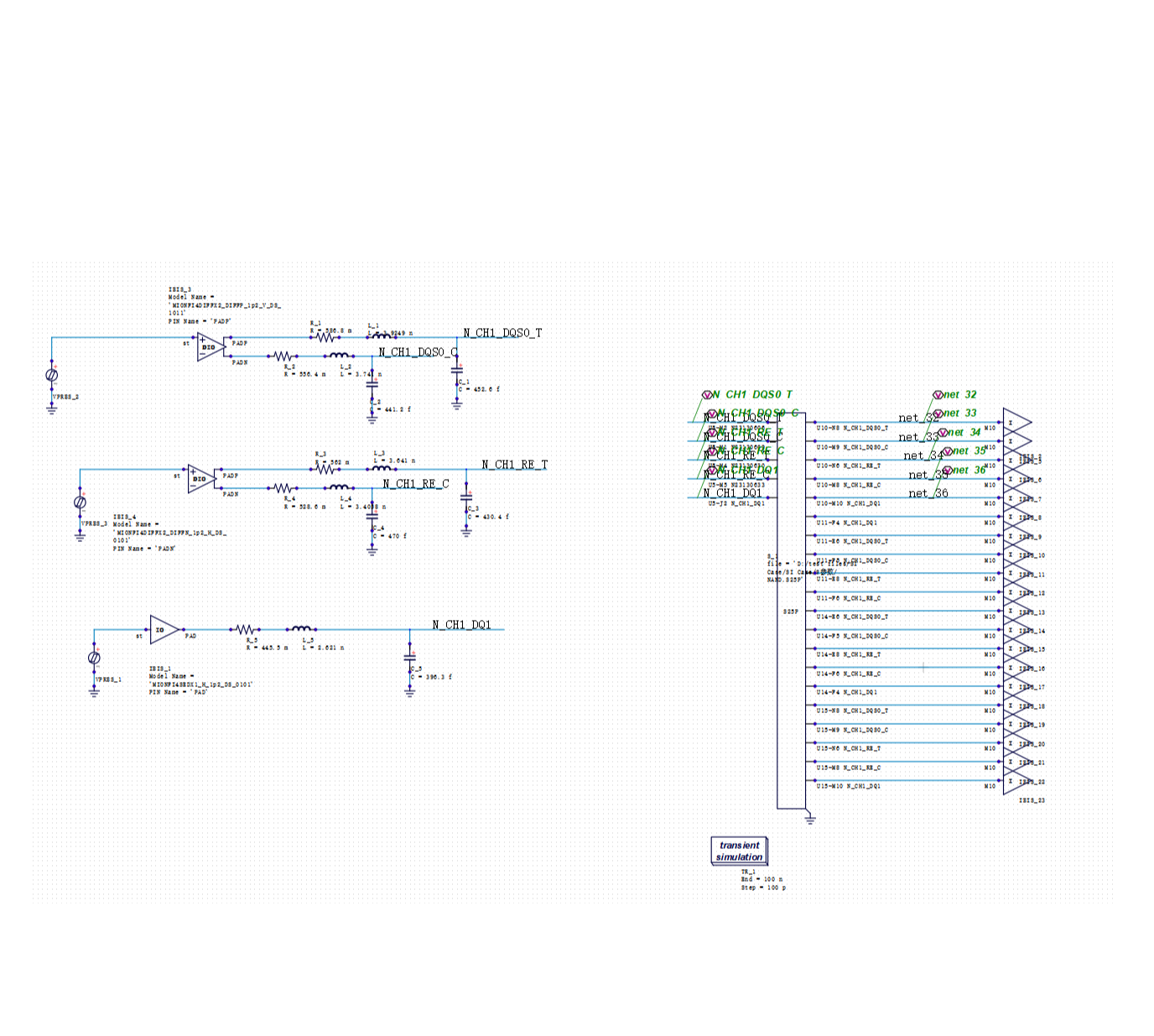

In modern chip design, a full-link simulation of a high-speed SerDes interface can involve millions of transistors. For the Signal Integrity (SI) analysis of a high-speed memory controller, the number of circuit nodes can reach tens of millions.

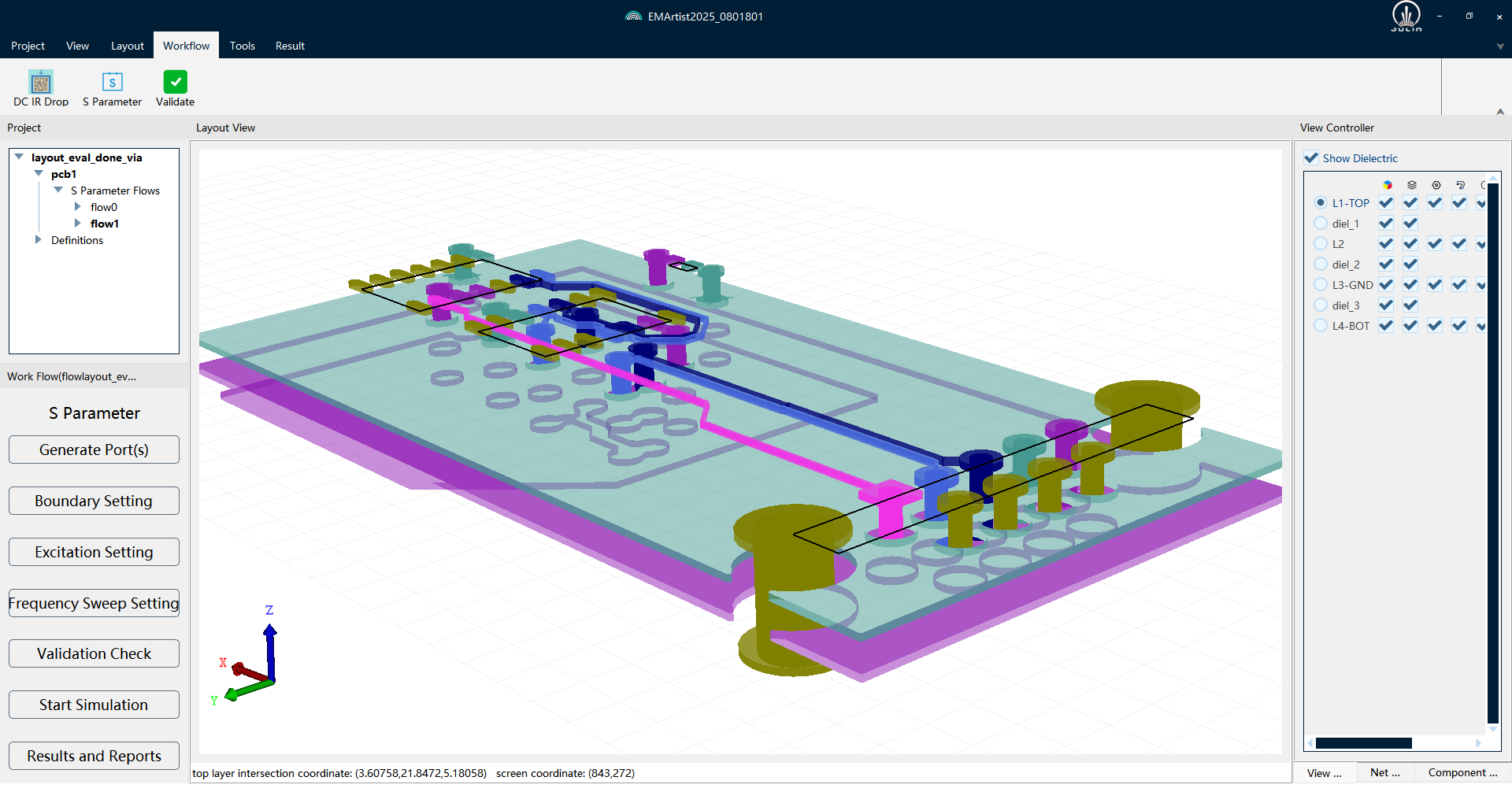

Crucially, in high-speed circuits, every parasitic parameter of a transmission line, every electromagnetic coupling in a via, and every point of impedance discontinuity can significantly impact signal quality. SPICE must accurately model and solve for all these intricacies, causing computational complexity to grow at a geometric rate.

2.2 Extreme Precision Requirements

Bit Error Rate (BER) requirements have tightened from the 1e-12 standard of early designs to 1e-50 in certain current scenarios, a span of 38 orders of magnitude.

If traditional transient simulation were used, meeting such a target would in principle require simulating the transmission of on the order of 1e50 bits. Even with the most advanced commercial SPICE tools, a simulation of that length could not be completed on any practical timescale.
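The scale of the problem can be estimated with a short calculation. A standard zero-error bound says that to claim BER ≤ p at confidence CL, about N = −ln(1 − CL)/p consecutive bits must be observed without a single error (roughly 3/p at 95% confidence). The sketch below applies that bound and converts it into wall-clock time. The `THROUGHPUT` of 1e4 simulated bits per second is a hypothetical, deliberately generous assumption, not a benchmark of any tool; because transient cost is linear in simulated time, runtime simply scales with N.

```python
import math

def bits_for_ber(ber_target: float, confidence: float = 0.95) -> float:
    """Bits that must be observed with zero errors to claim BER <= ber_target
    at the given confidence (zero-failure bound: N = -ln(1 - CL) / BER)."""
    return -math.log(1.0 - confidence) / ber_target

def years_to_simulate(n_bits: float, bits_per_second: float) -> float:
    """Wall-clock years needed at an assumed simulation throughput."""
    seconds_per_year = 365.25 * 24 * 3600
    return n_bits / bits_per_second / seconds_per_year

# Hypothetical, optimistic throughput for a transistor-level transient run.
THROUGHPUT = 1e4  # simulated bits per wall-clock second (assumption)

for ber in (1e-12, 1e-50):
    n = bits_for_ber(ber)
    print(f"BER {ber:.0e}: {n:.2e} bits, ~{years_to_simulate(n, THROUGHPUT):.2e} years")
```

At the assumed rate, the 1e-12 target already needs roughly 9.5 years of simulation, and the 1e-50 target needs on the order of 1e39 years, which is why brute-force transient simulation is not an option at these BER levels.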

2.3 Continuously Rising Process Complexity

As technology migrates from planar CMOS to FinFET, and from silicon-based devices to 3rd-generation semiconductors (SiC, GaN), a transistor model at an advanced node may contain hundreds of parameters. These parameters often exhibit complex, non-linear coupling relationships.

Furthermore, Chip-Package-PCB co-design has become the industry norm. Signal Integrity analysis must now account for system-level parasitic effects and electromagnetic (EM) coupling across the entire signal path, further exacerbating simulation complexity.

2.4 Inherent Limitations of Traditional SPICE Architecture

Linear Cost of Time-Domain Transient Analysis: Computational overhead grows linearly with simulation time, making long-term behavior prediction inefficient.

Sample Dependency in Statistical Analysis: Traditional Monte-Carlo methods require millions of independent simulations to achieve statistical significance.

The Precision-Speed Trade-off: Existing acceleration technologies often achieve speed at the expense of localized accuracy.

The Reality: Traditional methodologies have encountered a significant efficiency bottleneck when faced with modern technical demands.
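The sample dependency of plain Monte-Carlo analysis can be quantified with the binomial standard error: estimating a failure probability p from N independent runs gives a relative standard error of sqrt((1 − p)/(N·p)), so N must grow roughly as 1/p. The sketch below uses a standard-normal variable as a stand-in for a circuit performance metric (an assumption for illustration; in practice each sample is a full circuit simulation) and checks the formula against an actual Monte-Carlo estimate.

```python
import math
import numpy as np

def tail_probability(sigma: float) -> float:
    """Exact P(Z > sigma) for a standard normal variable Z."""
    return 0.5 * math.erfc(sigma / math.sqrt(2.0))

def relative_std_error(p: float, n_samples: int) -> float:
    """Relative standard error of a plain Monte-Carlo estimate of probability p."""
    return math.sqrt((1.0 - p) / (n_samples * p))

def samples_needed(p: float, rel_err: float) -> float:
    """Samples required for the relative standard error to equal rel_err."""
    return (1.0 - p) / (p * rel_err ** 2)

def mc_estimate(sigma: float, n_samples: int, seed: int = 0) -> float:
    """Plain Monte-Carlo estimate of P(Z > sigma) from n_samples draws."""
    rng = np.random.default_rng(seed)
    return float(np.mean(rng.standard_normal(n_samples) > sigma))

for s in (3.0, 4.75):
    p = tail_probability(s)
    print(f"{s}-sigma tail p={p:.2e}: ~{samples_needed(p, 0.10):.1e} samples for 10% error")
```

For a 3-sigma tail this is already about 74,000 samples for 10% relative error; near 4.75 sigma (p ≈ 1e-6) it is about 1e8.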

III. Divergence of Industry Technical Routes

In response to this dilemma, the industry has diverged into two mainstream technical routes, yet each is accompanied by significant limitations.

3.1 Route A: FastSPICE—The Speed-Priority Compromise

Core Concept: Partitioned Treatment. FastSPICE applies simplified models to digital circuitry and reserves precise SPICE solvers for critical analog paths.

Technical Advantages: It significantly shortens simulation time, making the rapid iteration of large-scale circuits possible.

Inherent Limitations: Simplified models introduce additional sources of error. In high-speed interface design, switching noise from seemingly minor digital buffers can couple into sensitive analog circuits via the power delivery network (PDN). Simplified models often fail to capture these complex cross-domain coupling effects.

Industry Practice: While FastSPICE performs well during early design iterations, engineers must ultimately return to True-SPICE for the final Signoff verification. This "dual-track" approach increases the complexity of the EDA toolchain.
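The extra error that simplified models introduce can be illustrated with one common kind of simplification: replacing an analytic device equation with a precomputed lookup table that is linearly interpolated at run time. The sketch below is a toy. The EKV-style drain-current expression and the parameter values (`IS`, `VTH`, `N_SLOPE`) are illustrative assumptions, not a production device model or any specific FastSPICE tool's method. It compares the table's worst-case relative error in subthreshold and in strong inversion.

```python
import numpy as np

# Illustrative parameters only (not from any real process design kit).
IS, VTH, N_SLOPE, VT = 1e-6, 0.35, 1.3, 0.02585  # A, V, unitless, V (kT/q near 300 K)

def drain_current(vgs):
    """Smooth EKV-style current: exponential below VTH, roughly quadratic above."""
    x = (np.asarray(vgs) - VTH) / (2.0 * N_SLOPE * VT)
    return IS * np.log1p(np.exp(x)) ** 2

def table_model(grid_step, v_max=0.9):
    """Simplified model: sample the equation once, then linearly interpolate."""
    grid = np.linspace(0.0, v_max, int(round(v_max / grid_step)) + 1)
    table = drain_current(grid)
    return lambda v: np.interp(v, grid, table)

def max_relative_error(grid_step, v_lo, v_hi):
    """Worst-case relative error of the table model over [v_lo, v_hi]."""
    v = np.linspace(v_lo, v_hi, 5001)
    exact = drain_current(v)
    approx = table_model(grid_step)(v)
    return float(np.max(np.abs(approx - exact) / exact))

for step in (0.05, 0.005):
    sub = max_relative_error(step, 0.0, 0.3)
    strong = max_relative_error(step, 0.6, 0.9)
    print(f"grid {step} V: subthreshold {sub:.1%}, strong inversion {strong:.1%}")
```

In this toy the error concentrates in the exponential subthreshold region, and refining the grid trades table size for accuracy.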

3.2 Route B: True-SPICE—The High Cost of Guaranteed Accuracy

Application Areas: Critical sectors with stringent reliability requirements, such as automotive electronics, industrial control, and aerospace.

Technical Advantages: Precision is guaranteed, providing a reliable basis for Signoff and mitigating the risk of tape-out failure caused by insufficient accuracy.

Inherent Limitations: Prohibitive time costs. A comprehensive statistical eye diagram analysis of a high-speed interface can take several weeks of continuous operation, even on large-scale server clusters. Because every design modification requires re-verification, this cost is paid again on each iteration.

3.3 The Nature of Industry Pain Points

Contradiction between Time Cost and Market Windows: A typical chip design cycle spans 12–18 months, yet high-precision SPICE simulation can consume more than 30% of that total timeframe.

Contradiction between Computing Resources and Project Budgets: Compute resources can account for 15–20% of the total project budget, a nearly unbearable burden for small and medium-sized teams.

Contradiction between Accuracy Guarantee and Design Iteration: The lengthy simulation times of high-precision tools limit the number of possible design iterations. However, thorough iteration is often the key to optimizing design quality.

The Core Issue: Traditional technical routes are currently unable to achieve order-of-magnitude improvements in efficiency while maintaining absolute precision.
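As a worked example of the time-cost figure above, spending more than 30% of a 12 to 18 month design cycle on high-precision simulation means at least:

$$0.30 \times 12\ \text{months} = 3.6\ \text{months}, \qquad 0.30 \times 18\ \text{months} = 5.4\ \text{months}$$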

IV. Potential Directions for Technical Breakthroughs

Amid this seemingly intractable dilemma, several innovative technical directions are emerging that could lead to breakthroughs.

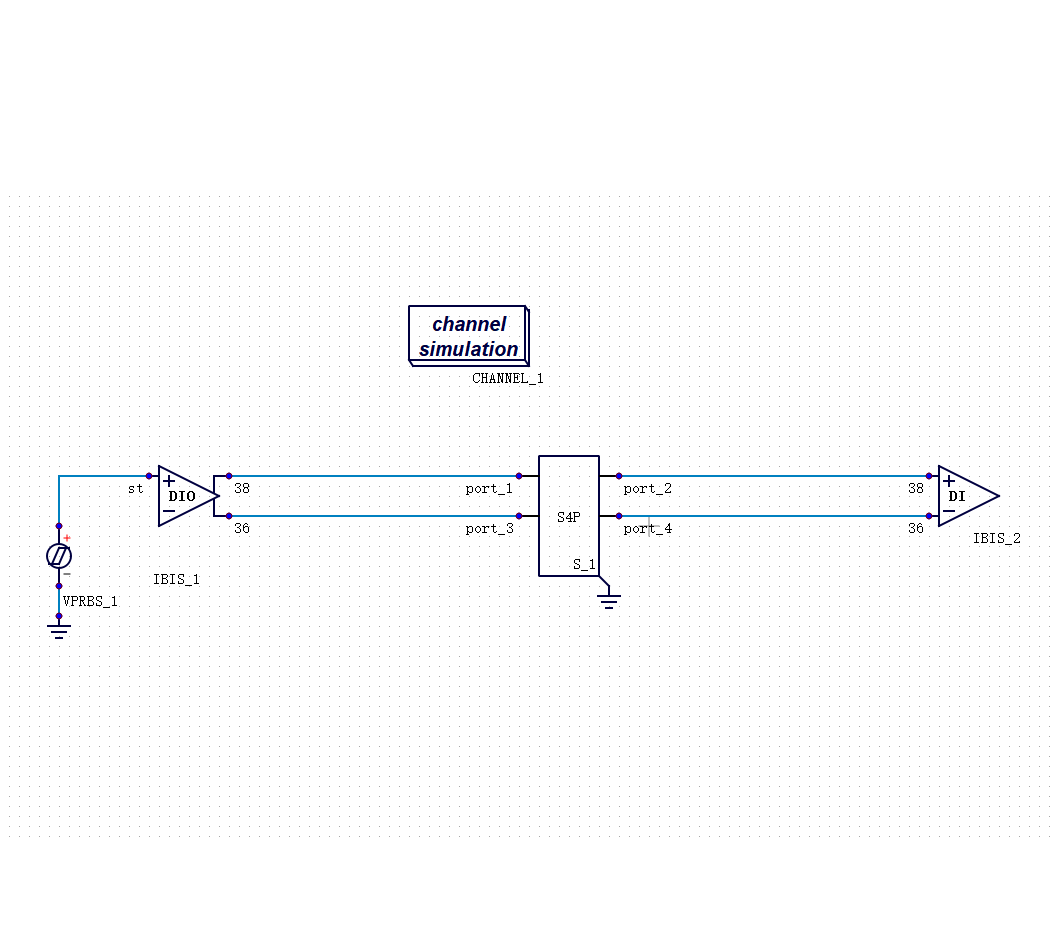

4.1 Paradigm Shift in Statistical Eye Diagram Analysis

Traditional eye diagram analysis is an "exhaustive" method—characterizing eye distribution by simulating massive bit sequences. In contrast, statistical eye analysis technology represents a paradigm shift: instead of gathering results through extensive transient simulations, it directly calculates the statistical distribution of the eye diagram using probabilistic analysis methods.

Technical Principle: It models signal transmission as a stochastic process that accounts for all noise sources, jitter sources, and their correlations, and derives the probability density function (PDF) of the eye diagram mathematically rather than by sampling.
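A minimal sketch of one common building block of statistical eye analysis follows, under simplifying assumptions: NRZ signaling with independent, equiprobable bits, a linear channel described by its sampled pulse-response cursors, additive Gaussian noise, and no jitter. The ISI at the sampling instant is a sum of independent ±cursor terms, so its distribution is the convolution of one two-point distribution per cursor, and the BER follows by weighting the Gaussian noise tail with that distribution. The cursor values and noise level are hypothetical. A brute-force enumeration of all bit patterns is included only as a cross-check; its cost grows as 2^n in the number of cursors, whereas the convolution never enumerates patterns.

```python
import itertools
import math
import numpy as np

def isi_distribution(isi_cursors, step):
    """Probability mass of the ISI sum  sum_k c_k * b_k  (b_k = -1 or +1,
    independent and equiprobable) on an amplitude grid of spacing `step`.
    Cursor amplitudes are quantized to the grid."""
    pmf = np.array([1.0])
    offset = 0  # index of amplitude 0.0 inside pmf
    for c in isi_cursors:
        k = int(round(abs(c) / step))
        # c_k * b_k is -|c_k| or +|c_k| with probability 1/2 each
        # (when k == 0 both halves land on the same bin).
        kernel = np.zeros(2 * k + 1)
        kernel[0] += 0.5
        kernel[-1] += 0.5
        pmf = np.convolve(pmf, kernel)
        offset += k
    amplitudes = (np.arange(pmf.size) - offset) * step
    return amplitudes, pmf

def gaussian_tail(x):
    """P(Z > x) for standard normal Z."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))

def statistical_ber(main_cursor, isi_cursors, noise_sigma, step=1e-4):
    """BER at one sampling instant for a transmitted +1 (symmetric for -1),
    decision threshold at 0: sum over a of P(ISI = a) * P(noise < -(main + a))."""
    amps, pmf = isi_distribution(isi_cursors, step)
    tails = np.array([gaussian_tail((main_cursor + a) / noise_sigma) for a in amps])
    return float(np.sum(pmf * tails))

def brute_force_ber(main_cursor, isi_cursors, noise_sigma):
    """Reference result: enumerate all 2**n ISI bit patterns."""
    total = 0.0
    for bits in itertools.product((-1, 1), repeat=len(isi_cursors)):
        isi = sum(b * c for b, c in zip(bits, isi_cursors))
        total += gaussian_tail((main_cursor + isi) / noise_sigma)
    return total / 2 ** len(isi_cursors)

cursors = [0.12, -0.05, 0.03, 0.02]  # hypothetical ISI cursors, volts
print(statistical_ber(0.6, cursors, 0.05), brute_force_ber(0.6, cursors, 0.05))
```

Sweeping the sampling phase and decision threshold with this kind of calculation yields BER contours, i.e. the statistical eye; production engines also fold in jitter, crosstalk, and correlated noise.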

Efficiency Enhancement Mechanisms:

Comprehensive Coverage: A single analysis covers the statistical range equivalent to millions of simulations in traditional methods.

Time Compression: Analysis time is compressed from weeks down to mere hours.

Performance Leap: Because one analysis stands in for millions of sampled simulations, the efficiency gain per equivalent sample can reach a million-fold.

Accuracy Performance: Because the analysis is built on probabilistic models, it gives up some precision compared with traditional exhaustive Monte-Carlo methods. However, the error is controllable and meets engineering requirements in the vast majority of design-iteration scenarios.

Key Breakthrough: This is not simple acceleration, but a fundamental paradigm shift at the algorithmic level.

4.2 Additional Technical Directions

Adaptive Solving Strategies: This approach adjusts the solving strategy dynamically according to the complexity of circuit behavior. By using larger time steps where signals are in steady state and finer steps around signal transitions, it aims to reduce intermediate computation while preserving the precision of the final output.
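The step-size idea can be illustrated on a toy problem. The sketch below integrates a single RC low-pass driven by a pulse with fast edges: it takes a trapezoidal step, uses its difference from a backward-Euler step as a local error estimate to grow or shrink the next step, and never steps across the source's corner points (breakpoints). This is a simplified stand-in for the local-truncation-error control used in production SPICE engines, not their actual algorithm, and the circuit values (`TAU`, the pulse timing, `tol`) are assumptions chosen for illustration.

```python
import math

# Hypothetical test circuit: RC low-pass, tau = 1 ns, driven by a pulse whose
# 10 ps edges start and end at fixed breakpoints (all values are assumptions).
TAU = 1e-9
BREAKPOINTS = [1.0e-9, 1.01e-9, 6.0e-9, 6.01e-9]

def vin(t):
    """Piecewise-linear source: 0 V, 10 ps rise to 1 V, hold, 10 ps fall to 0 V."""
    if t < 1.0e-9:
        return 0.0
    if t < 1.01e-9:
        return (t - 1.0e-9) / 1e-11
    if t < 6.0e-9:
        return 1.0
    if t < 6.01e-9:
        return 1.0 - (t - 6.0e-9) / 1e-11
    return 0.0

def backward_euler(v, t, h):
    """One backward-Euler step of dv/dt = (vin - v) / TAU (first order)."""
    a = h / TAU
    return (v + a * vin(t + h)) / (1.0 + a)

def trapezoidal(v, t, h):
    """One trapezoidal step of the same ODE (second order)."""
    a = h / (2.0 * TAU)
    return (v * (1.0 - a) + a * (vin(t) + vin(t + h))) / (1.0 + a)

def simulate_adaptive(t_stop, tol=1e-4, h_min=1e-15, h_max=5e-10):
    t, v, h = 0.0, 0.0, 1e-12
    steps = []
    while t < t_stop:
        # Never integrate across a source corner or past t_stop.
        next_bp = min([b for b in BREAKPOINTS if b > t] + [t_stop])
        hit_bp = h >= next_bp - t
        h_try = next_bp - t if hit_bp else h
        v_new = trapezoidal(v, t, h_try)
        # BE/TR difference approximates BE's local error, which scales ~h^2.
        err = abs(v_new - backward_euler(v, t, h_try))
        if err <= tol or h_try <= h_min:
            t = next_bp if hit_bp else t + h_try  # snap exactly onto breakpoints
            v = v_new
            steps.append(h_try)
        # Grow the step in quiet regions, shrink it near fast edges.
        factor = 2.0 if err == 0.0 else min(2.0, max(0.2, 0.9 * math.sqrt(tol / err)))
        h = min(h_max, max(h_min, h_try * factor))
    return steps, v

steps, v_end = simulate_adaptive(10e-9)
print(f"{len(steps)} steps, smallest {min(steps):.1e} s, largest {max(steps):.1e} s")
print(f"v(10 ns) = {v_end:.4f} V")
```

A fixed 1 ps step, fine enough for the 10 ps edges, would need 10,000 steps over 10 ns; the adaptive run concentrates small steps around the edges and takes much larger ones while the waveform settles.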

Parallel Computing Architectures: By fully leveraging multi-core CPUs and GPUs, tasks such as Monte-Carlo analysis and parameter sweeps are split into independent jobs that run simultaneously on hundreds of computing cores. Combined with optimized statistical methods, the speedup can approach linear scaling with the number of cores.
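The decomposition of a Monte-Carlo run into independent jobs can be sketched with Python's standard `concurrent.futures` module and NumPy's `SeedSequence.spawn`, which derives statistically independent random streams for each chunk from one root seed. The per-chunk workload (counting threshold-voltage samples above a spec limit) is a hypothetical stand-in for a circuit simulation. Because each chunk returns only a count, communication is negligible, which is what makes near-linear scaling plausible, and the result depends on the seed and chunk count but not on how many workers execute the chunks. Threads are used only to keep the sketch self-contained; for CPU-bound chunks in CPython, `ProcessPoolExecutor` (accepted through `executor_cls`) or a cluster scheduler is the more typical choice.

```python
from concurrent.futures import ThreadPoolExecutor
import numpy as np

def mc_chunk(seed_seq, n, vth_mean=0.35, vth_sigma=0.02, vth_limit=0.40):
    """One independent Monte-Carlo chunk (hypothetical workload): count samples
    whose threshold voltage exceeds a spec limit."""
    rng = np.random.default_rng(seed_seq)
    vth = rng.normal(vth_mean, vth_sigma, size=n)
    return int(np.count_nonzero(vth > vth_limit))

def parallel_failure_rate(n_samples, n_chunks=16, seed=2026, max_workers=4,
                          executor_cls=ThreadPoolExecutor):
    """Split n_samples into n_chunks independent jobs and combine their counts."""
    # Independent child streams from one root seed: reproducible regardless of
    # the number of workers or the order in which chunks finish.
    children = np.random.SeedSequence(seed).spawn(n_chunks)
    base, extra = divmod(n_samples, n_chunks)
    sizes = [base + (1 if i < extra else 0) for i in range(n_chunks)]
    with executor_cls(max_workers=max_workers) as pool:
        failures = sum(pool.map(mc_chunk, children, sizes))
    return failures / n_samples

print(parallel_failure_rate(200_000))
```

With these illustrative parameters the limit sits 2.5 sigma above the mean, so the estimate should land close to the exact tail probability of about 0.0062.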

4.3 Technical Significance: Redefining the Boundaries of Accuracy and Efficiency

Significant Increase in Statistical Coverage: Engineers are no longer restricted to sampling typical corners. Instead, they can efficiently explore a much broader parameter space to uncover latent issues that traditional methods might never reach.

Transformation of Design Iteration Models: As simulation times shrink from days to hours, designers can perform significantly more optimization rounds. This allows for the discovery of optimization opportunities that were previously obscured by time constraints.

A Strategic Balance Between Efficiency and Accuracy: Under current technical conditions, the statistical eye diagram method provides an engineering pathway that balances high efficiency with acceptable accuracy. This reduces the switching costs associated with "dual-track" toolchains. However, the deep-seated contradiction between accuracy and efficiency remains a primary target for ongoing industry innovation.

Conclusion: A New Era of Technical Evolution

SPICE simulation technology has evolved for more than 50 years, growing from an open-source project at UC Berkeley into critical infrastructure for the global semiconductor industry. Today, chip design is undergoing a new round of technological transformation.

The core of next-generation SPICE technology lies in a paradigm shift at the algorithmic level:

From exhaustive sampling statistics → Probabilistic analysis based on mathematical models.

From fixed-precision global solving → Adaptive dynamic optimization.

From serial computing architectures → Deeply parallel heterogeneous computing.

These explorations are continuously pushing the boundaries of accuracy and efficiency. The statistical eye diagram method represents a current engineering trade-off—exchanging controllable precision for order-of-magnitude efficiency gains. This enables design teams to complete more thorough iteration and verification within increasingly tight market windows.

Nevertheless, achieving "extreme efficiency without sacrificing accuracy" remains the core, unresolved challenge in the field of SPICE simulation. As chips evolve toward higher performance, lower power consumption, and more complex systems, the demand for higher-precision and higher-efficiency simulation methods will only grow. Deep breakthroughs at the algorithmic level remain the most anticipated direction for the future.

Looking Ahead: The true unification of accuracy and efficiency is not a destination already reached, but a continuous technological conquest.

Technical Notes:

The statistical eye diagram analysis technology described herein is based on rigorous probability theory and stochastic process models.

Error Calculation Method:

Δ = |measured - reference| / |reference| × 100%
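The error formula translates directly into a small helper. This sketch takes the absolute value of the reference so the result stays non-negative for negative reference quantities, and it rejects a zero reference, for which relative error is undefined. The eye-height values in the usage line are hypothetical.

```python
def relative_error_percent(measured: float, reference: float) -> float:
    """Relative deviation of a result from its reference, in percent:
    |measured - reference| / |reference| * 100."""
    if reference == 0:
        raise ValueError("relative error is undefined for a zero reference")
    return abs(measured - reference) / abs(reference) * 100.0

# Hypothetical example: statistical-eye height vs. a True-SPICE reference (volts).
print(f"{relative_error_percent(0.412, 0.420):.2f}%")  # prints 1.90%
```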
