IIC Shanghai | High-Speed Interface SI Signoff: Precision Limitations of Statistical Domain Algorithms and Path Reconstruction


2026.04.02

Most engineers who have taken PCIe Gen6/7 or DDR5 designs through Signoff have run into the same dilemma: there is no universally accepted standard workflow. Some teams follow JEDEC specifications, while others estimate margin from long-term engineering experience. Overall, the industry is still at the stage of relying on individual experience.

The cause is not poor implementation by tool vendors, nor a lack of effort in the EDA industry. It lies in the inherent algorithmic limitations of today's mainstream methodologies, and the problem keeps growing as next-generation high-speed interfaces evolve.

At IIC Shanghai 2026, Dong Jialong, Director of Technical Support at Julin-tech, delivered a keynote titled "The Challenges of High-Speed Interface SI Signoff Simulation to SPICE." Speaking from the perspective of an EDA tool provider and of SPICE algorithms, he systematically analyzed the root causes of this dilemma and shared some directional insights.

Background: The Three Evolutions of SPICE and the Rise of the Statistical Domain

The history of SPICE is a history of trading precision for speed to keep simulation feasible in engineering practice. Divided roughly into 20-year intervals, it has gone through three critical leaps.

In the 1970s, UC Berkeley released the open-source SPICE, which solves for the voltage and current at every circuit node by applying Kirchhoff's laws and Newton-Raphson iteration. Its high precision quickly made it an indispensable foundational tool for IC design. By the 1990s, as circuits grew to hundreds of thousands or even millions of transistors, True-SPICE run times stretched from days to months, which was unacceptable in engineering practice. FastSPICE emerged in response, using local approximations to gain orders of magnitude in speed and removing the scale bottleneck at an acceptable loss of precision.
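
As a rough illustration of what "Kirchhoff's laws plus Newton-Raphson" means in practice, the sketch below solves a single node in a toy circuit: a voltage source driving a resistor in series with a diode. The circuit, the component values, and the function name `solve_diode_node` are assumptions made for this example only; a real SPICE engine solves a large matrix system with far more elaborate device models.

```python
import math

def solve_diode_node(vs=1.0, r=1e3, i_sat=1e-12, v_t=0.02585,
                     tol=1e-12, max_iter=100):
    """Solve KCL at the resistor/diode node with Newton-Raphson.

    KCL at the node: (v - vs) / r + i_sat * (exp(v / v_t) - 1) = 0
    All component values are hypothetical defaults for illustration.
    """
    v = 0.6  # initial guess, near a typical diode forward voltage
    for iteration in range(1, max_iter + 1):
        exp_term = math.exp(v / v_t)
        f = (v - vs) / r + i_sat * (exp_term - 1.0)   # KCL residual
        df = 1.0 / r + i_sat * exp_term / v_t         # derivative of residual
        step = f / df
        v -= step                                     # Newton-Raphson update
        if abs(step) < tol:
            return v, iteration
    raise RuntimeError("Newton-Raphson did not converge")

v_node, iterations = solve_diode_node()
print(f"node voltage = {v_node:.4f} V after {iterations} iterations")
```

Each iteration linearizes the non-linear device around the current guess, which is why accuracy is high but cost grows quickly with circuit size.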

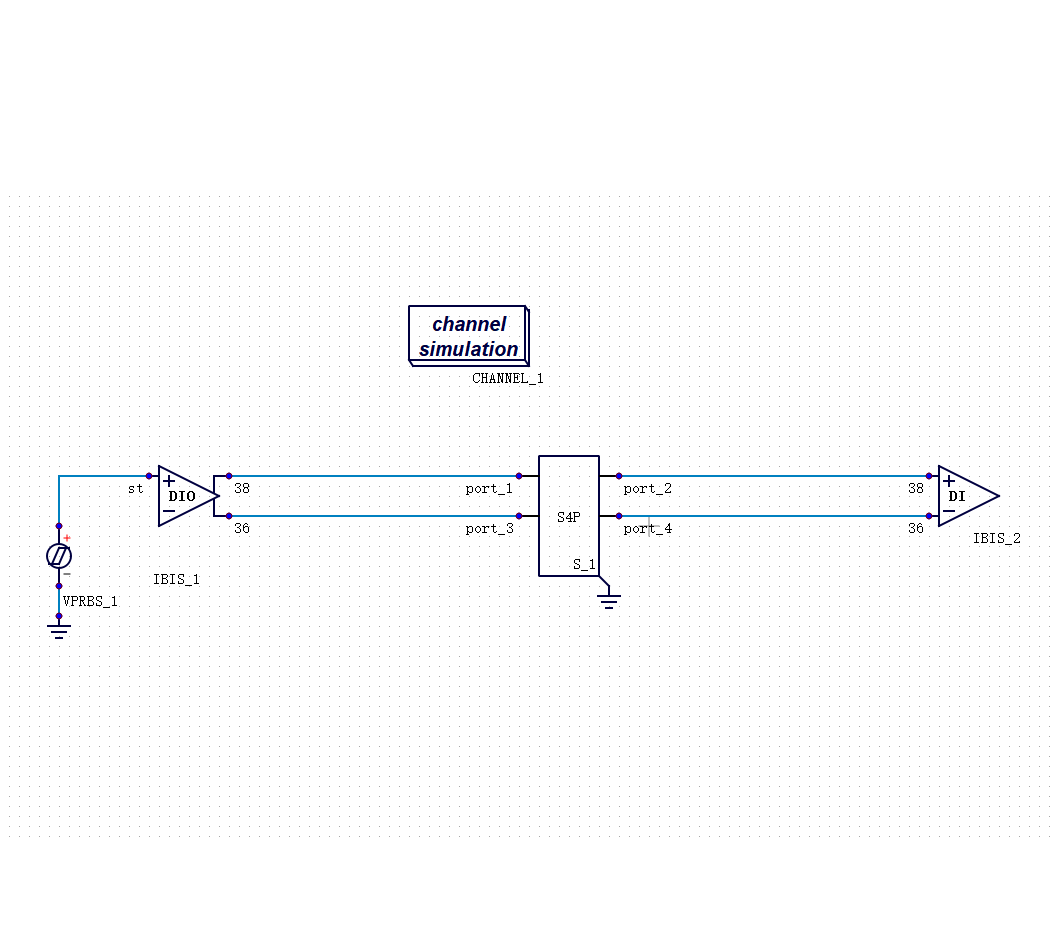

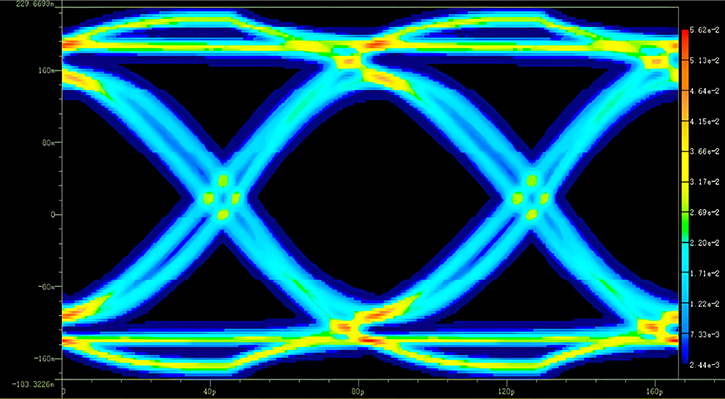

In the 2010s, data rates moved into the Gbps range, and Bit Error Rate (BER) became the core Signoff metric, with verification required down to error probabilities of 1e-12 or even 1e-16. In theory this means simulating on the order of 10¹⁷ bits, which would take even FastSPICE hundreds of years, so the full-transient methodology breaks down completely here. Statistical domain algorithms (Statistical Eye) were therefore introduced as a natural extension of SPICE: the step response obtained from SPICE simulation serves as the "raw material," and the eye diagram probability distribution and margin are calculated directly in the statistical domain. Combined with IBIS behavioral models, this bypasses the time bottleneck of BER simulation.
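
To make the statistical-domain idea concrete, here is a minimal sketch, assuming NRZ signaling, independent equiprobable bits, and a purely linear channel. The cursor values are hypothetical samples of a pulse response (which a tool would derive from the SPICE step response), and the function names are illustrative. Production Statistical Eye engines additionally sweep every sampling phase and fold in jitter and crosstalk.

```python
from collections import defaultdict

def isi_pmf(isi_cursors, resolution=1e-3):
    """Probability mass function of total ISI voltage at the sampling instant.

    Under linear superposition each ISI cursor c adds +c or -c with
    probability 1/2, so the total PMF is a chain of two-point convolutions.
    """
    pmf = {0: 1.0}  # keys are integer multiples of `resolution`
    for c in isi_cursors:
        k = round(c / resolution)
        nxt = defaultdict(float)
        for level, p in pmf.items():
            nxt[level + k] += 0.5 * p
            nxt[level - k] += 0.5 * p
        pmf = dict(nxt)
    return {level * resolution: p for level, p in pmf.items()}

def eye_closure_probability(main_cursor, isi_cursors, threshold=0.0):
    """Probability that a transmitted '1' is sampled at or below threshold."""
    pmf = isi_pmf(isi_cursors)
    return sum(p for v, p in pmf.items() if main_cursor + v <= threshold)

# Hypothetical cursors in volts: main cursor 0.45, four ISI cursors.
print(eye_closure_probability(0.45, [0.2, 0.15, 0.1, 0.05]))  # 0.0625
```

The cost grows with the number of cursors and voltage bins rather than with the number of bits, which is how the approach reaches 1e-12 or lower without simulating that many bits.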

These three paradigms coexist rather than supersede one another, each occupying its own niche: True-SPICE is the precision baseline, FastSPICE is the engineering choice for large-scale simulation, and Statistical Eye is the efficient solution for BER verification.

The Dilemma: Structural Cracks in Existing Methodologies

Understanding the evolutionary logic of SPICE helps us comprehend the origins of the dilemma facing SI Signoff today.

Currently, SI simulation for Signoff is roughly divided into two paths: simulation with Jitter and simulation without Jitter.

The jitter-free approach relies on vendors providing Jitter specifications in advance. Engineers correct the margin based on these specifications, requiring the simulation to achieve the corresponding eye height and width. The problem with this method is that the credibility of the Jitter values provided by the vendor is difficult to independently verify—an SoC often needs to be paired with various DRAMs, and the behavior of different combinations cannot be entirely consistent. Furthermore, vendors tend to provide conservative estimates to avoid liability, which actually consumes a larger portion of the design margin.

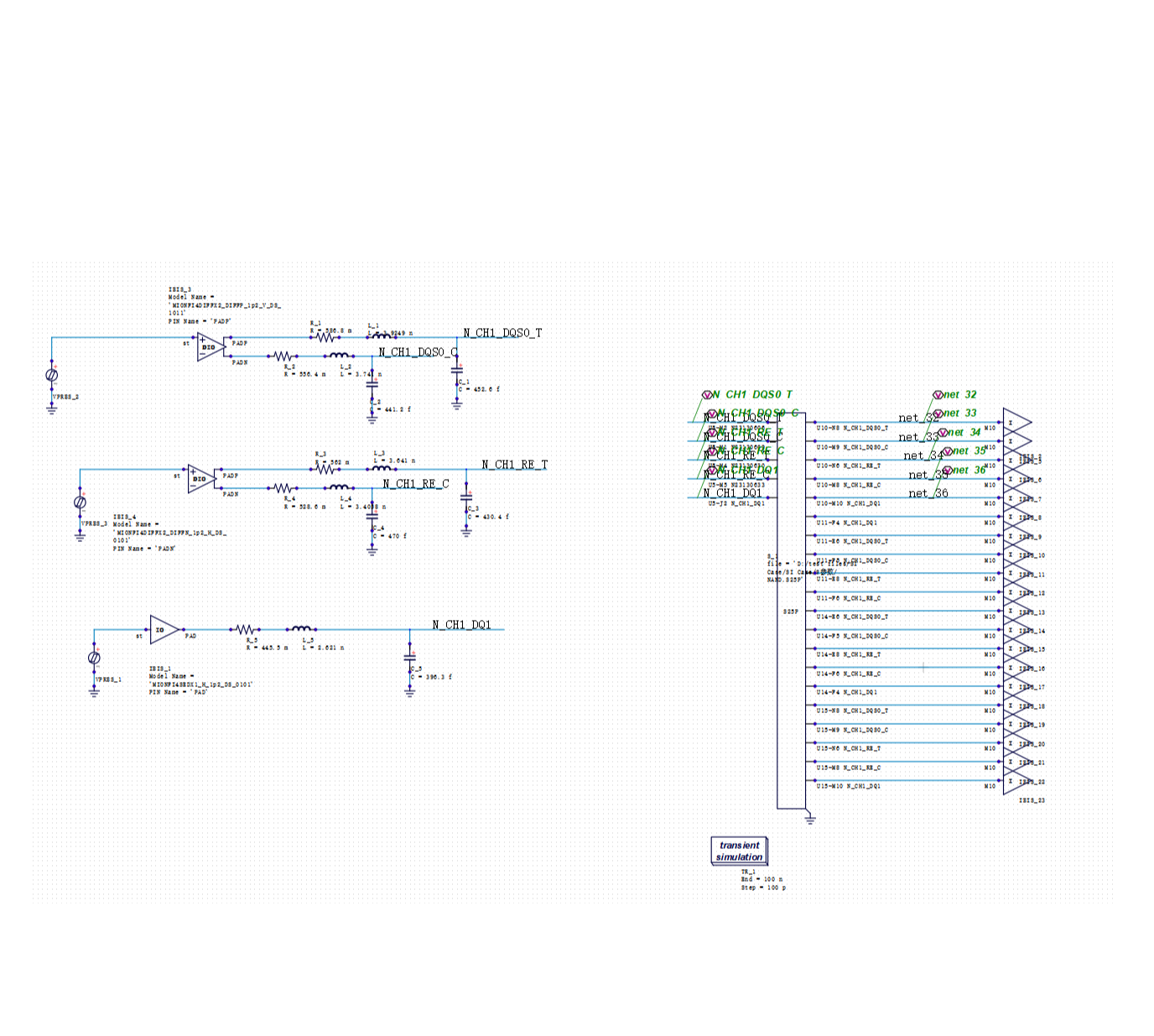

Simulation with Jitter faces another challenge: how to model the Jitter itself. Channels have an amplification effect on Jitter, and the statistical characteristics of Random Jitter (RJ) require a massive number of bits to manifest—if the simulated bit count is too small, the results are distorted; if the bit count is too large, the simulation time becomes unacceptable. This contradiction has yet to find a satisfactory solution.
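
The bit-count problem can be seen in a small Monte Carlo experiment. The sketch below is an illustration under stated assumptions: RJ is modeled as ideal Gaussian noise with a hypothetical 1 ps RMS value, and the function name `observed_rj_span` is invented for this example. It tracks the peak-to-peak jitter seen so far as more edges are simulated.

```python
import random

def observed_rj_span(sigma, checkpoints, seed=1):
    """Peak-to-peak jitter observed after each checkpoint number of edges.

    One Gaussian stream is drawn, so each checkpoint extends the previous
    one and the observed span can only stay equal or grow.
    """
    rng = random.Random(seed)
    lo = hi = 0.0
    spans = {}
    drawn = 0
    for n in sorted(checkpoints):
        while drawn < n:
            x = rng.gauss(0.0, sigma)
            lo, hi = min(lo, x), max(hi, x)
            drawn += 1
        spans[n] = hi - lo
    return spans

sigma_ps = 1.0  # hypothetical RJ RMS value in picoseconds
for n, span in observed_rj_span(sigma_ps, [100, 10_000, 100_000]).items():
    print(f"{n:>7} edges: observed peak-to-peak = {span:.2f} ps")
```

Even at 100,000 edges the observed span stays well short of the roughly 14 sigma that a Gaussian tail implies at a BER of 1e-12, which is why short runs understate RJ and why the tail has to be either simulated at great cost or extrapolated.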

Statistical domain algorithms (Channel Simulation) also have inherent limitations of their own. The entire family of algorithms is built on the mathematical assumption of linear superposition, whereas real-circuit interference such as crosstalk and power noise is fundamentally non-linear and cannot be safely linearized. Later algorithms such as MER have brought improvements, but the precision flaws are rooted in the algorithmic foundation and cannot be fully eliminated. This is not a question of how well EDA tool vendors implement the algorithms, but an innate constraint of the algorithmic framework itself.
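
A toy numerical check shows what breaks when the linear superposition assumption fails. Everything here is hypothetical: a three-tap FIR stands in for the channel, a `tanh` stage stands in for a saturating driver, and a constant offset stands in for a disturbance coupled into the driver. The sketch compares the response to the combined input against the sum of the individual responses.

```python
import math

def channel(x, taps=(0.6, 0.25, 0.1)):
    """Linear FIR channel: y[n] = sum_k taps[k] * x[n - k]."""
    return [sum(t * x[n - k] for k, t in enumerate(taps) if n - k >= 0)
            for n in range(len(x))]

def driver(x, gain=2.0):
    """Hypothetical saturating driver, modeled with tanh."""
    return [math.tanh(gain * v) for v in x]

def link(x, nonlinear):
    """Full link: optional non-linear driver followed by the linear channel."""
    return channel(driver(x) if nonlinear else x)

def superposition_error(a, b, nonlinear):
    """Max difference between link(a + b) and link(a) + link(b)."""
    direct = link([p + q for p, q in zip(a, b)], nonlinear)
    summed = [p + q for p, q in zip(link(a, nonlinear), link(b, nonlinear))]
    return max(abs(d - s) for d, s in zip(direct, summed))

data = [1, -1, 1, 1, -1, -1, 1, -1]   # NRZ symbols
disturbance = [0.3] * len(data)       # hypothetical coupled disturbance

print("linear link error:    ", superposition_error(data, disturbance, False))
print("non-linear link error:", superposition_error(data, disturbance, True))
```

For the linear link the error is at floating-point noise level; with the saturating stage it becomes a substantial fraction of the signal swing, and that gap is what a superposition-based eye cannot represent.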

As for Bit-by-Bit simulation, the biggest challenge lies in extrapolation accuracy: when the simulated bit count is limited, mathematical models must be used to extrapolate to the target BER. Currently, the industry widely adopts the Dual-Dirac model, but whether this assumption is universally applicable remains highly questionable.
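
For reference, the commonly used form of the Dual-Dirac extrapolation can be written in a few lines. This is a minimal sketch, assuming the usual textbook formulation in which total jitter at a target BER is the dual-Dirac deterministic jitter plus 2·Q(BER) times the RMS random jitter; the function name and the example values are hypothetical, and refinements such as transition density are ignored.

```python
from statistics import NormalDist

def total_jitter_dual_dirac(rj_rms, dj_dd, ber=1e-12):
    """Extrapolate total jitter to a target BER with the Dual-Dirac model.

    TJ(BER) = DJ_dd + 2 * Q(BER) * RJ_rms, where Q(BER) is the Gaussian
    quantile whose one-sided tail probability equals BER.
    """
    q = -NormalDist().inv_cdf(ber)
    return dj_dd + 2.0 * q * rj_rms

# Hypothetical fitted values in picoseconds.
rj_rms_ps, dj_dd_ps = 0.8, 5.0
for ber in (1e-12, 1e-16):
    tj = total_jitter_dual_dirac(rj_rms_ps, dj_dd_ps, ber)
    print(f"BER {ber:.0e}: TJ = {tj:.2f} ps")
```

With `dj_dd=0` and `rj_rms=1` the function returns the familiar factor of roughly 14.07 at 1e-12. The formula is only as good as the assumption that the measured tails really are Gaussian and that DJ is well described by two impulses, which is the doubt raised above.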

In summary, Channel Simulation offers usable speed and comprehensive functional coverage but suffers from innate algorithmic precision flaws, while Full Transient is highly accurate but cannot meet engineering demands for efficiency and functional flexibility. Each path has its Achilles' heel, yet Signoff needs the strengths of both. This is why high-speed interfaces such as DDR5 still lack a universally recognized, unified Signoff standard. Large manufacturers define their own flows based on accumulated experience, while small and mid-sized teams are often forced to compromise between precision and feasibility.

Research Foundation: From True-SPICE to System-Level SI Simulation

Before exploring a possible response to these issues, it is necessary to describe the research and engineering work Julin-tech has carried out at each SPICE level, since this is the foundation for the discussion that follows.

PanosSPICE is Julin-tech's self-developed True-SPICE simulation engine, integrating mainstream device models such as BSIM3/4, PSP, BSIMCMG, and VBIC. It also features the Level 90/91 physical device models for 3rd-gen semiconductors (GaN and SiC), jointly developed with Southeast University, filling the modeling void in the emerging power device simulation field. In terms of simulation accuracy, PanosSPICE has passed independent validation by multiple top-tier clients, being recognized as a Signoff-grade simulation tool that achieves the Golden standard in analog/mixed-signal IC design and IP verification scenarios.

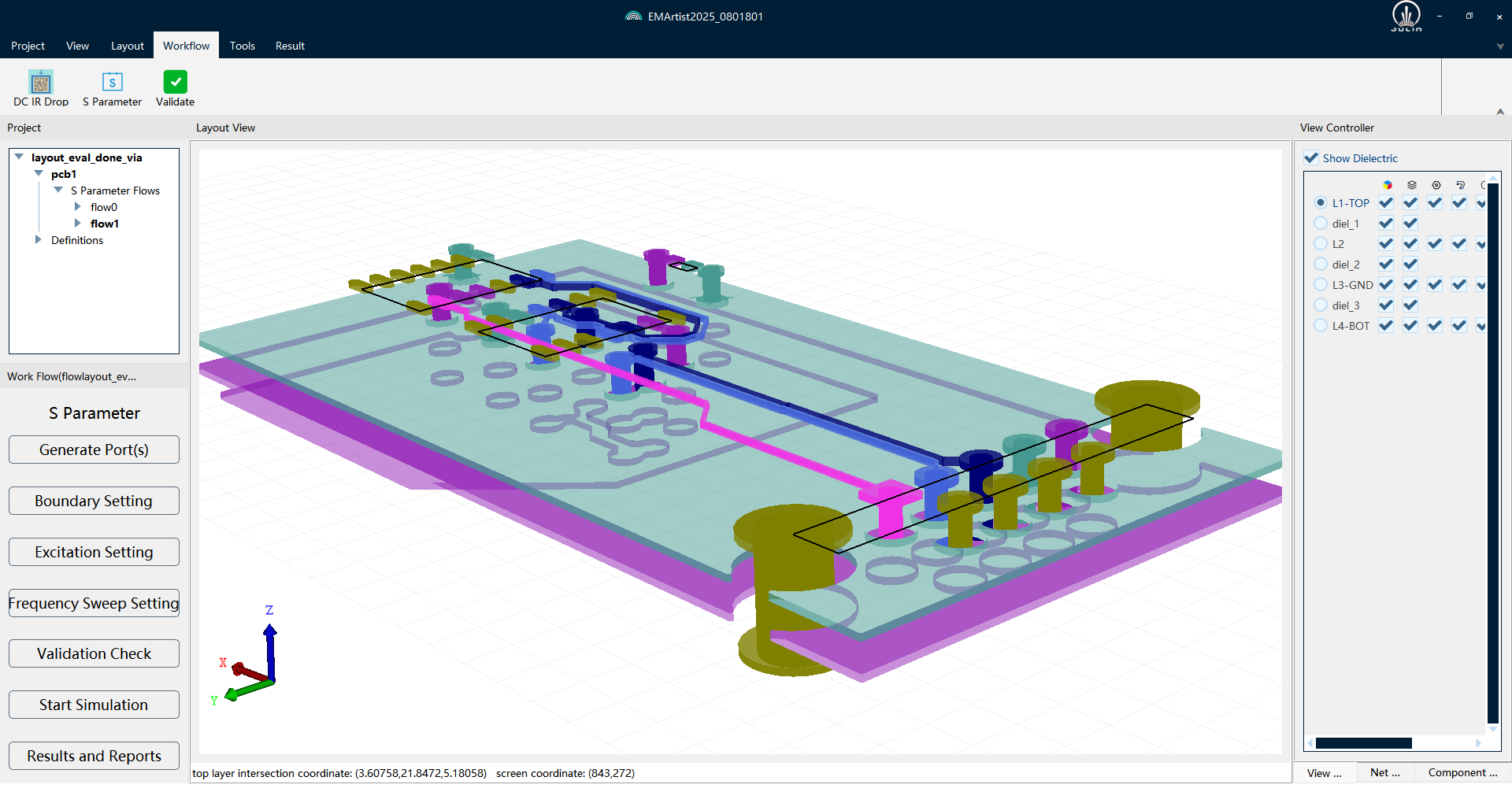

SIDesigner represents Julin-tech's engineering practice achievements in system-level SI/PI simulation—a one-stop SI/PI simulation platform covering all core scenarios of industry-mainstream SI/PI simulation tools, with both transient simulation and Statistical Eye accuracy reaching the Golden level. The platform also encompasses several engineering value-added functions co-developed with customers: DFQ (multivariate design space optimization based on DOE+RSM+ANOVA), BERC (BER Contour prediction merging time-domain and channel simulation, covering mainstream high-speed parallel interfaces like DDR4/5, GDDRx, and UCIe), and RS-Code simulation (evaluating the error-correction efficacy of RSFEC in actual channels).

This shows that, from the True-SPICE engine to statistical domain algorithms and on to system-level SI/PI full-link simulation, Julin-tech has sustained R&D investment and accumulated engineering validation at every SPICE algorithmic level. It is on this foundation that we believe we are equipped to explore further the challenges currently facing high-speed interface Signoff.

Could the Answer Lie in Another Direction?

Bearing the above understanding in mind, we have been pondering a question:

FastSPICE represents a compromise between True-SPICE precision and Statistical Eye speed, but fundamentally, it still leans towards True-SPICE—it is, after all, a transistor-level transient simulation. So, is it possible for a "reverse compromise" to exist—equally a trade-off between the two, but this time leaning towards the statistical domain side?

If the fundamental problem of Channel Simulation is the precision ceiling imposed by its linear assumptions, then one possible line of thought is to shift the focus back to transient simulation, but to use it in a different way.

Instead of running the full volume of BER bits and pursuing extreme precision, we could simulate a batch of representative worst-case patterns—patterns that are long and typical enough to reflect the system's non-linear behavior and worst-case scenarios. During this process, if equalization algorithms or even AMI simulations could be flexibly introduced, it would break through the functional flexibility limitations of traditional SPICE workflows. Finally, statistical analysis is performed on this batch of results to estimate the BER and eye diagram margins.
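
The talk does not specify how such worst-case patterns would be chosen. One conventional starting point, shown here purely as an assumption, is peak distortion analysis: given pulse-response cursors, pick each neighboring bit so that its ISI contribution works against the victim bit. The cursor values and function names below are hypothetical, and because the method is itself linear it could only seed a pattern set that transient simulation would then exercise with the real non-linear circuit.

```python
def worst_case_bits(isi_cursors):
    """Neighbor bits (+1/-1) that minimize the sampled level of a '+1' victim.

    Each neighbor is chosen so that bit * cursor is never positive.
    """
    return [-1 if c > 0 else 1 for c in isi_cursors]

def worst_case_level(main_cursor, isi_cursors):
    """Sampled voltage of the victim bit under the worst-case pattern."""
    bits = worst_case_bits(isi_cursors)
    return main_cursor + sum(b * c for b, c in zip(bits, isi_cursors))

# Hypothetical cursors in volts.
main = 0.5
isi = [0.05, -0.02, 0.12, -0.04]
print(worst_case_bits(isi))                    # [-1, 1, -1, 1]
print(round(worst_case_level(main, isi), 3))   # 0.27
```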

Such a workflow would not be as fast as traditional Channel Simulation, but it might yield results within a few hours; in terms of precision, it would not be as complete as Full Transient, but it holds the promise of providing more reliable figures than pure statistical domain methods, grounded on actual transistor-level simulations.

This is merely a directional reflection, and the problems far outnumber the answers. Whether it can be achieved, and to what extent, requires further validation. However, we believe this direction is worthy of serious exploration.

Conclusion

As high-speed interface standards such as DDR5, HBM, and UCIe continue to evolve, and as AI chips keep raising the demands on system-level simulation precision, the accuracy threshold for Signoff workflows will only climb higher, and the structural contradiction between precision and efficiency will become increasingly prominent. How to complete genuinely trustworthy simulation verification within a time window that is feasible in engineering practice is a challenge the entire industry must face squarely.

Looking to the future, Julin-tech will consistently uphold the mission of "Precise Simulation, Empowering the Future." We will continue to deeply cultivate "circuit" and "electromagnetic" simulation technologies, closely aligning with frontier industry demands. By maintaining profound collaboration with strategic clients and supply chain partners, we will continuously forge and launch new industry benchmark products.
